Mip-NeRF RGB-D: Depth-Assisted Fast Neural Radiance Fields

An extension of Mip-NeRF that integrates depth supervision from RGB-D sensors to accelerate training and improve geometric accuracy in neural radiance field reconstruction.

Abstract

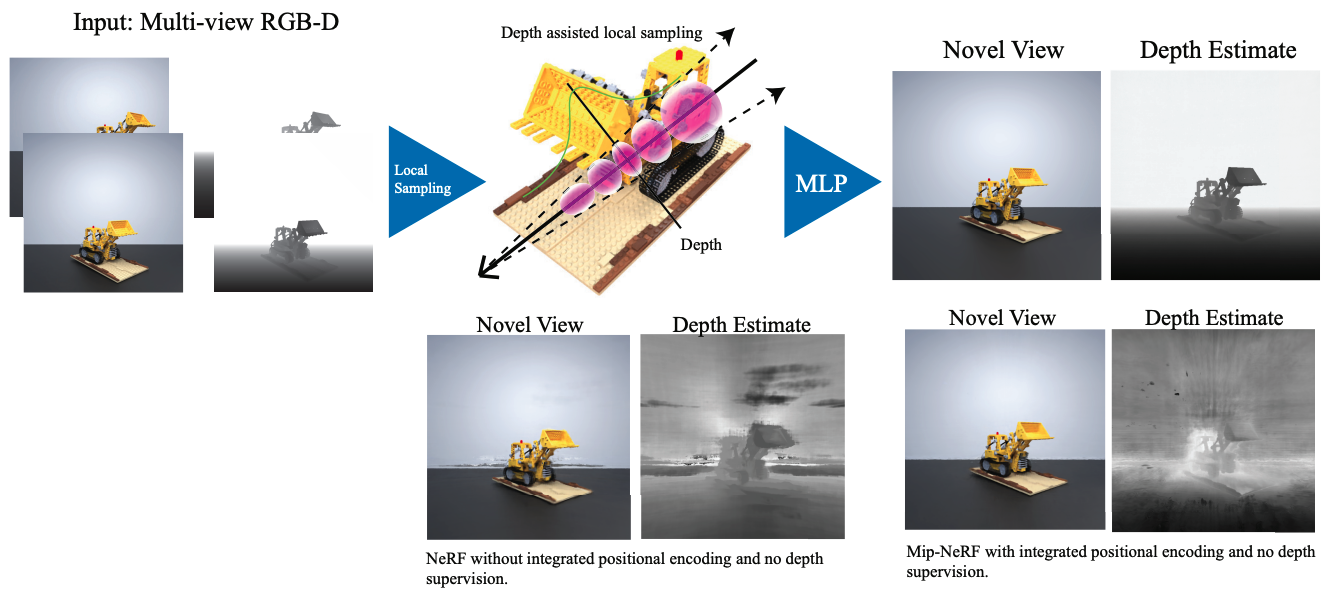

Neural scene representations, such as Neural Radiance Fields (NeRF), are based on training a multilayer perceptron (MLP) using a set of color images with known poses. An increasing number of devices now produce RGB-D (color + depth) information, which has been shown to be very important for a wide range of tasks. The aim of this paper is therefore to investigate what improvements can be made to these promising implicit representations by incorporating depth information alongside the color images. In particular, the recently proposed Mip-NeRF approach, which uses conical frustums instead of rays for volume rendering, makes it possible to account for the varying area of a pixel with distance from the camera center. The proposed method additionally models depth uncertainty. This addresses major limitations of NeRF-based approaches: it improves geometric accuracy, reduces artifacts, and shortens both training and prediction time. Experiments are performed on well-known benchmark scenes, and comparisons show improved accuracy in scene geometry and photometric reconstruction, while reducing the training time by a factor of 3 to 5.
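To make the idea of depth supervision with uncertainty concrete, the sketch below computes standard NeRF volume-rendering weights along one ray, derives an expected depth from them, and scores that depth against a sensor reading with a Gaussian negative log-likelihood. This is an illustrative assumption and not the loss used in the paper: the function names, the Gaussian noise model, and the toy sample values are all hypothetical, and the conical-frustum encoding of Mip-NeRF is left out for brevity.

```python
import math


def render_weights(densities, deltas):
    """Standard NeRF volume-rendering weights for the samples of one ray.

    densities: non-negative volume density per sample.
    deltas: length of the interval covered by each sample.
    """
    weights = []
    transmittance = 1.0
    for sigma, delta in zip(densities, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)  # opacity of this interval
        weights.append(transmittance * alpha)
        transmittance *= 1.0 - alpha  # fraction of light passing through
    return weights


def expected_depth(weights, t_mids):
    """Weighted mean of sample distances, normalised by accumulated opacity."""
    total = sum(weights)
    if total == 0.0:
        return 0.0  # empty ray: no surface was hit
    return sum(w * t for w, t in zip(weights, t_mids)) / total


def depth_nll(pred_depth, sensor_depth, sensor_std):
    """Gaussian negative log-likelihood of a sensor depth (hypothetical loss).

    sensor_std is an assumed per-pixel standard deviation of the depth sensor.
    """
    var = sensor_std ** 2
    residual = pred_depth - sensor_depth
    return 0.5 * math.log(2.0 * math.pi * var) + residual ** 2 / (2.0 * var)


# Toy ray with density concentrated at t = 2.0 (hypothetical values).
t_mids = [0.5, 1.0, 1.5, 2.0, 2.5]
deltas = [0.5] * 5
densities = [0.0, 0.0, 0.0, 50.0, 0.0]

weights = render_weights(densities, deltas)
depth = expected_depth(weights, t_mids)
loss_good = depth_nll(depth, sensor_depth=2.0, sensor_std=0.05)
loss_bad = depth_nll(depth, sensor_depth=2.5, sensor_std=0.05)
```

Under this assumed noise model, a smaller `sensor_std` penalises disagreement with the sensor more strongly, so reliable depth pixels constrain the geometry tightly while noisy ones contribute less.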

Download paper here. Download data here. Request access to the data by sending an email to arnabdey0503@gmail.com.

Recommended citation:

@ARTICLE{Dey2022B02,
  author={Dey,A. and Ahmine,Y. and Comport,A.I.},
  title={{Mip-NeRF RGB-D: Depth Assisted Fast Neural Radiance Fields}},
  journal={Journal of WSCG},
  year={2022},
  volume={30},
  pages={34-43},
  doi={10.24132/JWSCG.2022.5},
  publisher={Union Agency, Science Press},
  issn={01689274},
  abbrev_source_title={JWSCG},
  document_type={Article},
  source={Scopus},
}

Citation

Dey, A., Ahmine, Y., and Comport, A.I. (2022). Mip-NeRF RGB-D: Depth-Assisted Fast Neural Radiance Fields. Journal of WSCG, Volume 30, pages 34-43. DOI: 10.24132/JWSCG.2022.5.